We observe a stark issue with the OCR solutions market: either tech teams are not adopting the new, unified LLM paradigm, or they overpay dramatically due to severe model mismatch. We put 18 models from 4 providers to the test in our OCR mini-bench, and collect the results in a business-focused leaderboard to stop decision makers from overpaying.

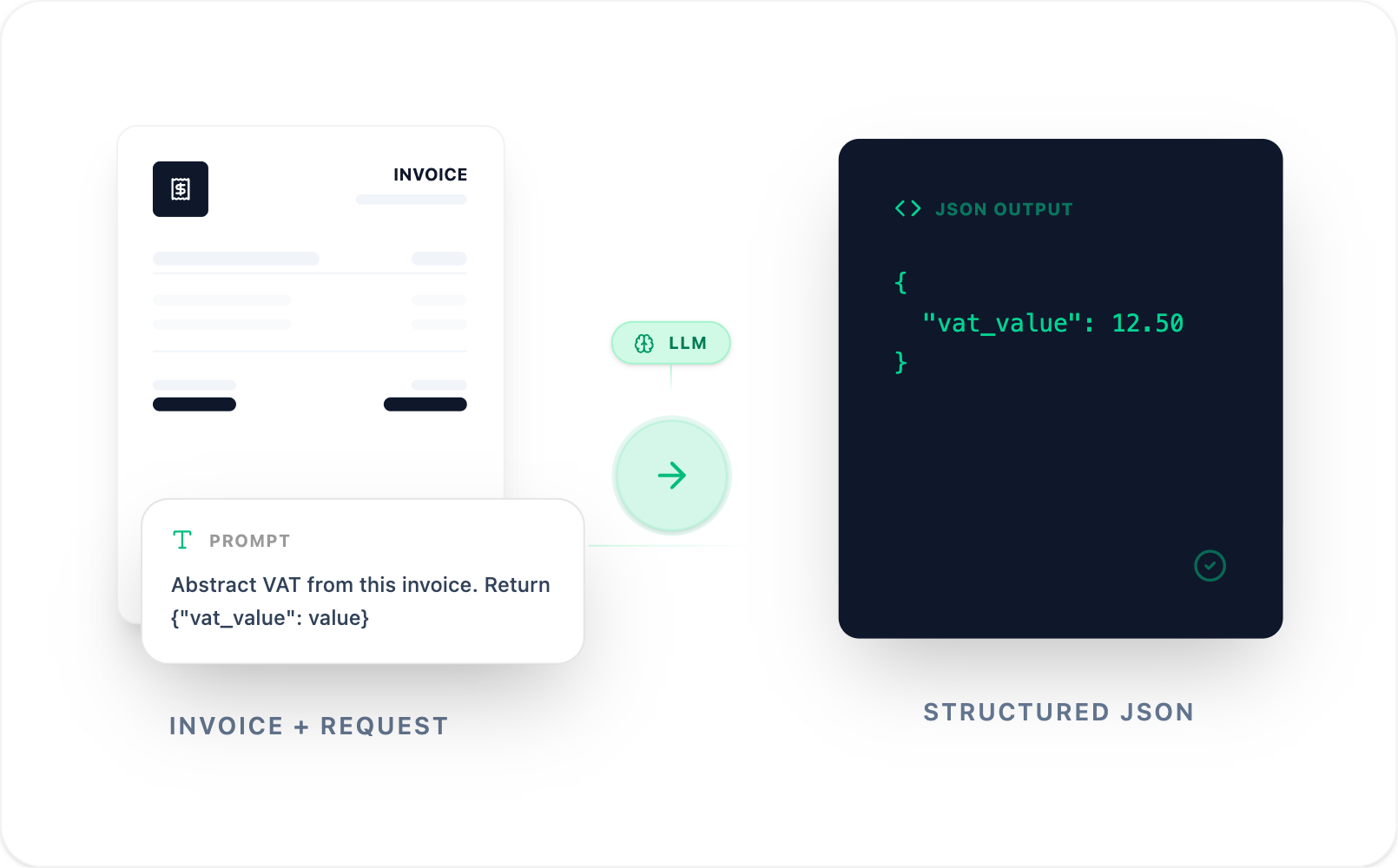

Abstracting information from documents is a core component of business automation workflows. Traditionally, this problem is tackled in two steps:

- Abstract words and numbers from a document or image; OCR models.

- Match the abstracted text to structured entities through pattern matching; regex.

Such legacy solutions quickly become complex and bloated. The OCR models are difficult to steer, and the pattern matching regex explodes into hundreds of lines of code - trying to capture every edge case.

The ever increasing performance of LLMs, in particular their multi-modal capabilities, present a simple, unified approach to the information abstraction process. These models take in an image (pdf, png, ...) together with a written request about the image, and return a structured answer.

This results in a steerable OCR process, and bypasses the majority of regex pattern matching. It replaces black-box models and hundreds of lines of regex code with a single system prompt. In many business-cases, it is an overall superior solution. Yet, many teams have not caught up yet, or are paying far more than they should.

How teams leave significant upside on the table

IT departments in legacy enterprises are biased towards the incumbent tech stack. They have settled for ways to manually work around the shortcomings of their old solutions. Small to mid-sized tech companies that are not disadvantaged by carrying old baggage, are much more readily adopting the LLM-first approach. Yet, despite their agile and fast approach, they are massively overpaying due to a mismatch between specific use-case and choice of model.

The LLM market is settling into a steady release cycle of new models. Every month at least one of the big labs releases their newest model, eclipsing its previous versions (and those of their competitors). As a result, tech leaders are faced with a choice between a growing list of models across a handful of providers. What happens is that they adopt one provider, and settle on the state-of-the-art (SotA) model at that time. After a couple of successful tests they are confident the SotA model can handle their specific scenario: case closed.

However, whether the state-of-the-art model can solve your problem is perhaps the wrong question to ask. A more business-oriented approach is to look for the optimal model that fulfils your requirements. Indeed, older, smaller, and much cheaper models can perform just as well as the newest versions - and at a fraction of the cost. This is especially the case for standard tasks, which covers an immense segment of business cases.

Many tech teams are dramatically overpaying for models that are overkill for their use-case.

OCR Mini-bench and corresponding leaderboard help settle this question with data.

OCR Mini-bench

The premise is simple: how do models - across different providers - compare against each other for information abstraction capabilities for standard documents. The procedure aligns with our core vision: simulate a realistic, business-relevant environment; measure business-metrics that matter; adhere to compliance and statistical rigour.

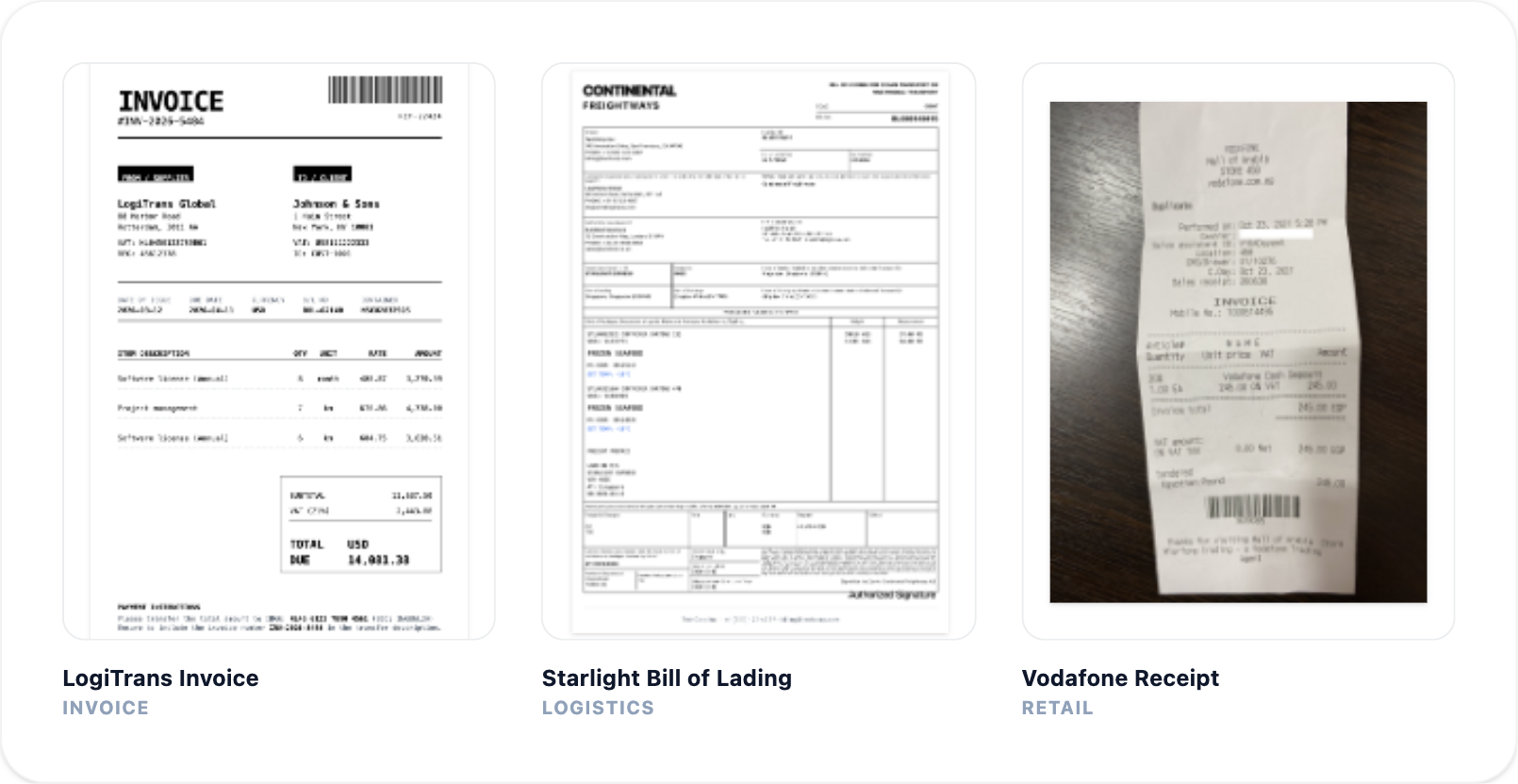

We construct a dataset that consists of three document types that represent a broad section of the target market. The documents form a diverse, realistic set of a complexity that covers the majority of documents encountered in business settings.

- Receipts: 13 images randomly picked from a standard, open-source dataset.[1]

- Invoices: 12 PDF invoices spanning different fields and complexities.

- Logistics: 9 Bills of Lading, 8 Transport Orders.

Despite invoices, bills of lading, and transport orders being notoriously difficult to share due to privacy constraints, we did not want to give up on realism. Therefore, all documents are based on real templates and information density, but with all data synthesised. This stringent procedure ensures realism is maintained, while at the same time allows us to fully open-source the dataset.

AI solutions get their value from operating in real world business-processes. To simulate production scale we go beyond the one-shot paradigm adopted by many other benchmarks. Instead of answering the question "Can the model solve the task once?", we ask "Given the same input, how much does the model performance deteriorate over many runs." To get such a measure of reliability, we present every model each document 10 times under identical circumstances and track its performance.

We focus on performance metrics that directly translate into business insights, going beyond the traditional academic measures. Some examples:

- Critical fields: For each document, we define a set of critical fields that present the minimum information required to make sense of the document within its business context. Without abstracting all of these fields, the automation workflow cannot be considered viably from a business perspective.

- Cost-per-success: We go beyond the cost-per-token by acknowledging that LLMs sometimes fail the task presented to them. Yet, pass or fail, the tokens are paid for. Therefore, cost-per-success measures the economic viability of a model more accurately.

- Pass^n: A metric first proposed by Sierra's tau-bench team. It measures the deterioration of the model success during subsequent calls. It is a metric of model reliability at scale.

Leaderboard

Every model provider is represented by three tiers of models: State-of-the-art, balanced, and a value-optimised option. OpenAI, Anthropic, Google, and Mistral all have models fitting this categorisation, where capabilities and price go hand in hand.

One full sweep consists of providing every document to each model, 10 times in a row under identical conditions. This results in a total coverage of 5880 LLM API calls. Once complete we compare the output with a manually-labeled ground truth to judge the performance.

The full results are published on the OCR Mini-bench leaderboard page. An overall trend is clear:

for standard OCR tasks the older, small models perform just as well as state-of-the-art models, at a fraction of the cost.

Sometimes the cost difference reaches two digit multiples! Mapping such number onto processing thousands of documents per month results in significant cost-savings that cannot be neglected.

As LLMs become a core part of most technology stacks, tech teams must become aware of model (cost) dynamics. Being model agnostic, running internal benchmarks and building regression sets are all part of the modern approach to robust AI solutions.

Open Source + free trial

The benchmark framework and dataset are made completely open-source to promote replicability by others. The repository can be used to benchmark your own, custom dataset. Alternatively, the framework can be tailored to run the benchmark on your own, custom models. When you do - with the same dataset and system prompt - we can add your model to the leaderboard after a short verification. Curious how models compare on your own documents? Test them in our free OCR flow.

Note that caching is explicitly enabled for all models providers that support it. Batching has been omitted due to considering latency as a relevant business metric.